Navigating container orchestration for websites and applications

Today’s organizations face major challenges in effectively deploying and managing their online services, applications, and websites. In recent years, with interest in infrastructure technologies such as Kubernetes and Docker surging, container orchestration solutions have emerged as a core technology to help overcome these challenges and modernize how software is deployed. While many organizations have adopted some level of containerization within development, adoption for production remains relatively low, with organizations citing a lack of expertise and increasing security challenges as barriers.

Many organizations are therefore shifting to cloud hosting platforms, also called Platform-as-a-Service (PaaS), for application development and deployment, including container orchestration, as an alternative to building on Kubernetes themselves. Such a platform provides the complete infrastructure needed for production, including security, load balancing, and high availability, all fully supported by the platform provider.

As cloud hosting platforms vary significantly, we will explain the benefits and technologies of containers, container orchestration, and Platform-as-a-Service. This includes what each technology does and doesn’t provide. We will cover some of the functionality necessary for organizations to leverage containers in production.

The benefits of containerization for developers

Containers are built around core Linux kernel features (namespaces and cgroups, which tools such as LXC build on) that allow complete native isolation of an application’s view of the operating environment, including process trees, networking, user IDs, and mounted file systems. In other words, the Linux kernel itself provides a way of securely virtualizing an application without the need to spin up a virtual machine.

As a result, containers offer developers a solution to problems caused by differences between environments. If an application is deployed within a container, its view of the world is always the same. The segregation/sandboxing means another application can’t overwrite the memory being used, and an application runs the same regardless of the underlying hardware and infrastructure used for the cloud. Containers are useful for individual developers, who can manage and script them on a small scale through numerous mechanisms. Container technologies such as Docker or rkt have also become popular to help associate applications with containers and services.

Using Kubernetes to orchestrate containers

Kubernetes (often referred to as K8s) is one of the most popular frameworks for container orchestration.

Kubernetes can be used to build a platform that then allows containers to be operated, deployed, moved, and scaled to maintain the desired state of the application and end service. The application itself runs on a distributed system of cloud and physical servers, using orchestration to ensure the resources are available and used optimally for the whole system, balancing and adjusting according to the needs of the applications.
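The idea of maintaining a desired state can be illustrated with a minimal sketch. The `Cluster` class and `reconcile` function below are hypothetical stand-ins invented for this example; they are not part of the Kubernetes API, and a real orchestrator does far more (scheduling, health checks, networking). The sketch only shows the core loop: compare what is running with what was requested, then start or stop containers until the two match.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Cluster:
    """Hypothetical in-memory stand-in for a cluster (not a Kubernetes API)."""
    running: List[str] = field(default_factory=list)
    counter: int = 0

    def start_container(self, image: str) -> None:
        # Give each new container a unique, increasing suffix.
        self.counter += 1
        self.running.append(f"{image}-{self.counter}")

    def stop_container(self) -> None:
        # Stop the most recently started container.
        self.running.pop()


def reconcile(cluster: Cluster, image: str, desired_replicas: int) -> int:
    """Move the cluster toward the desired count; return the number of actions."""
    actions = 0
    while len(cluster.running) < desired_replicas:
        cluster.start_container(image)
        actions += 1
    while len(cluster.running) > desired_replicas:
        cluster.stop_container()
        actions += 1
    return actions


cluster = Cluster()
reconcile(cluster, "web", desired_replicas=3)  # scale up from 0 to 3
cluster.running.pop(0)                         # simulate a crashed container
reconcile(cluster, "web", desired_replicas=3)  # the loop replaces it
print(cluster.running)  # ['web-2', 'web-3', 'web-4']
```

Calling `reconcile` again when nothing has changed performs no actions, which is why such a loop can safely run continuously.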

Theoretically, this means that with the right triggers and monitoring a web application can respond to changing demands on it. If demand surges, for example, additional copies of a container can be spun up in seconds in geographies nearer to the demand. This, however, relies on building the infrastructure and tools within Kubernetes or leveraging and integrating the right third-party tools and functionality.
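As a rough illustration of such a trigger, the sketch below follows the scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The function name and parameters are our own, and the real autoscaler adds tolerances and stabilization windows that are omitted here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int,
                     max_replicas: int) -> int:
    """Simplified scaling rule: ceil(current * observed / target), clamped."""
    scaled = math.ceil(current_replicas * current_metric / target_metric)
    # Never go below the floor or above the ceiling set by the operator.
    return max(min_replicas, min(scaled, max_replicas))


# Four replicas averaging 90% CPU against a 60% target.
print(desired_replicas(4, 90, 60, min_replicas=2, max_replicas=10))  # 6
```

The clamp matters in practice: without an upper bound, a sudden surge could request more capacity than the underlying infrastructure or budget allows.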

How to build a platform with Kubernetes

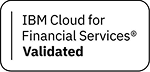

To leverage container orchestration in production, you first need to build a platform that typically provides:

- High availability

- Security updates

- Environment cloning for developers to test their changes

- Backups

- Automated generation of staging clusters

- Web application firewalls

- Storage allocation and purchasing

- Content delivery network

- Monitoring and feedback

Kubernetes itself is not a platform; it’s a framework within which you can build one. The types of products and services that are added to Kubernetes can give you an idea of what’s needed:

- storage/host management (AWS, Ceph, Terraform)

- container templating/service registration (Docker, Rancher, Helm, JFrog)

- logging/metrics (Grafana, fluentd)

- code building and publishing (Jenkins, GitHub)

- load balancing (Netscaler/Citrix ADC).

To build a platform around Kubernetes, you will need not only to evaluate, license, and support numerous tools and technologies, but also to maintain and patch those components and manage their interactions.

Beyond maintaining and patching an infrastructure around Kubernetes, a production deployment needs significant development, tooling, and processes to integrate technologies that manage containers and their contents. While containers make it easier for developers to build applications faster, the software inside them can contain vulnerabilities when developers rely on outdated components that are unpatched or unsupported. Later we will cover how these challenges can be overcome by leveraging declarative architecture features within a cloud hosting platform or Platform-as-a-Service (PaaS).

Simplifying Kubernetes complexity with PaaS

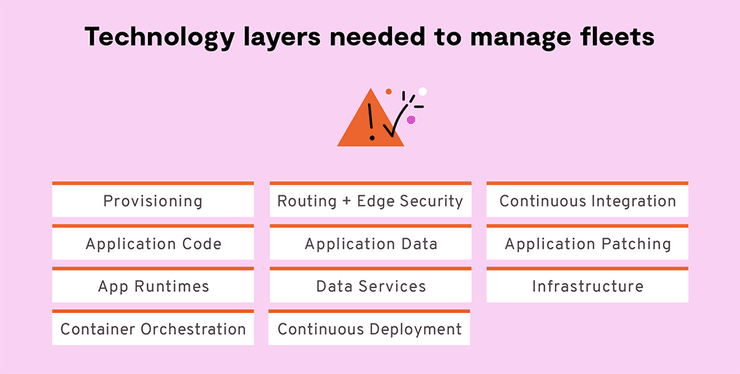

If your teams spend too much time evaluating, discussing, and implementing Kubernetes architecture components (such as Pods, Labels, ReplicaSets, and ConfigMaps) or debating whether and how to combine Rancher with Helm and RabbitMQ, then a Platform-as-a-Service (PaaS) could suit your organization.

A PaaS provider takes on the overhead of building and supporting the development platform and all the components. Developers and architects are then free to focus on developing and improving their websites and applications.

Platform.sh helps scale and secure all your websites

Platform.sh supports development stacks that include PHP, Drupal, Strapi, WordPress, Python, Laravel, Node.js, Magento, and many more. We power website portfolios consisting of thousands of websites and applications for brands and organizations such as:

The British Council

In just three months, Platform.sh helped The British Council migrate its in-house system to Platform.sh. The system now supports 1,000 staff across 120 multilingual sites with 115 million users in 110 countries.

The University of Missouri

Working with Platform.sh, the University of Missouri consolidated hundreds of websites and 13 different content management systems.

Regardless of the stacks our customers use, we focus on measurable business value features and practical use case scenarios. We enable customers deploying websites to:

- Patch all their sites centrally

- Secure their data with fully managed database services

- Ensure governance over technology and processes

- Provide high availability SLAs (up to 99.99%)

- Rely on 24x7 global support
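To put an availability figure in concrete terms, the short sketch below converts an SLA percentage into the downtime it permits over a year. The function name is our own, and the calculation assumes a 365-day year; actual SLA terms (measurement windows, exclusions) vary by contract.

```python
def allowed_downtime_minutes(availability_percent: float, days: int = 365) -> float:
    """Minutes of downtime permitted over the period at a given availability."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - availability_percent / 100)


# 99.99% availability leaves roughly 52.56 minutes of downtime per year.
print(round(allowed_downtime_minutes(99.99), 2))
# 99.9% availability leaves roughly 525.6 minutes (about 8.8 hours) per year.
print(round(allowed_downtime_minutes(99.9), 1))
```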

By focusing on the specific use cases and productivity features that a platform needs to provide, developers can often help an organization expose the security and reliability issues of in-house Kubernetes management.

Guides to demystifying Kubernetes or containers offer insight into the fundamental soundness of those technologies. But designing, documenting, and maintaining a working system is a very different matter. Managed Kubernetes services offer a wide range of individual components, but it’s up to the individual organization to figure out how to achieve high availability and backups, and how to integrate load balancing or security gateways.

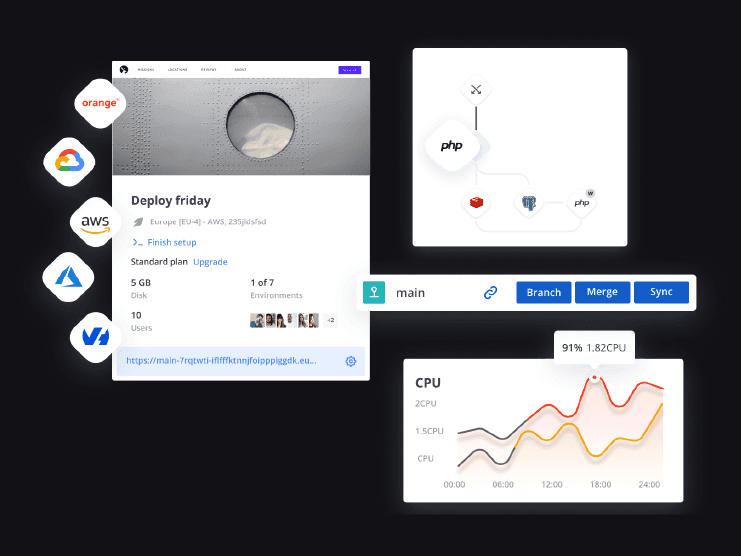

Platform.sh: The benefits of an integrated PaaS solution

Key points:

- Platform.sh leverages standard native containers and provides orchestration.

- A vast amount of functionality is provided beyond container orchestration.

- Key tools and applications are integrated into the platform, with the licensing and patching responsibilities undertaken by the platform.

- Platform.sh provides a choice of infrastructure, sourced and priced in bulk (e.g., AWS, Azure).

Next steps

Does a bespoke Kubernetes solution meet your requirements? Platform.sh has experts available who can help walk you through your unique situation and provide a cost analysis of switching to Platform.sh.
